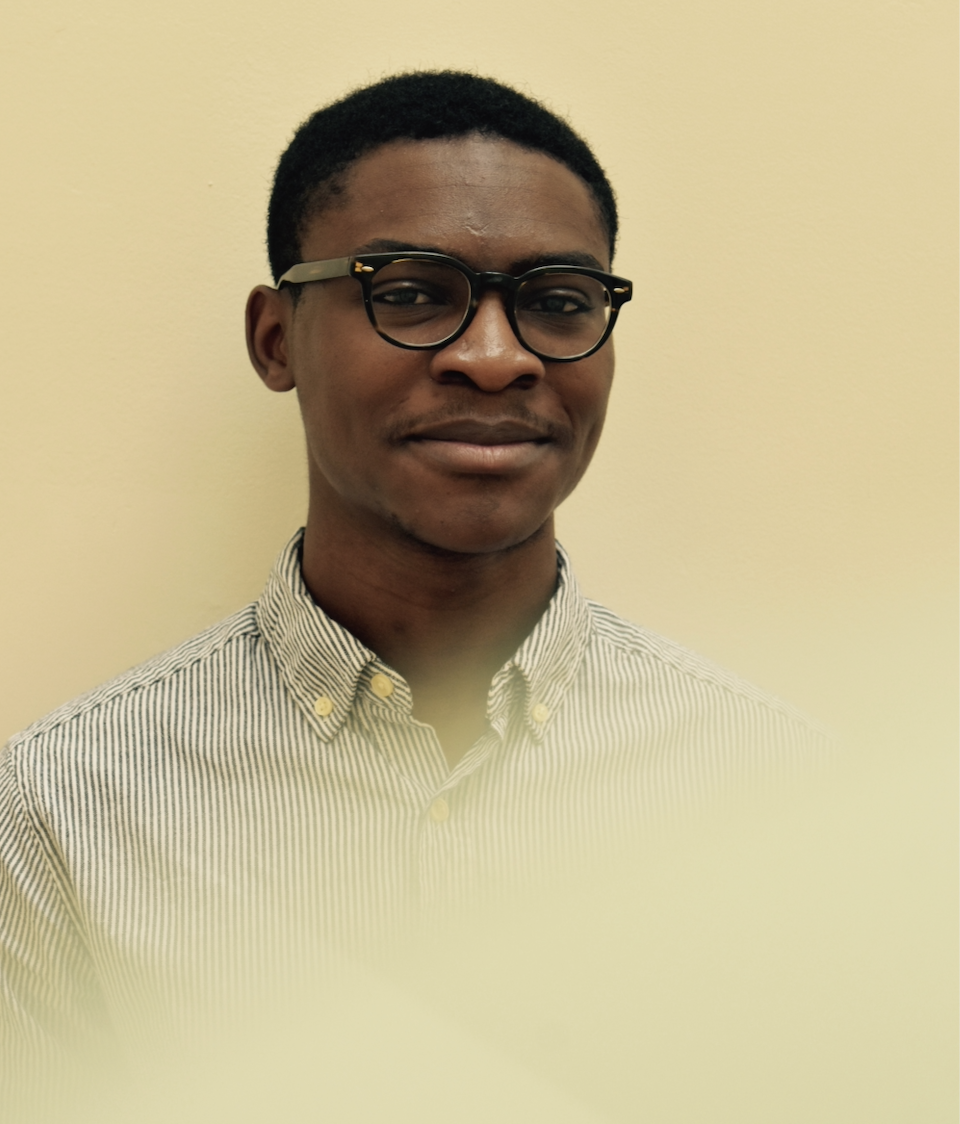

Daniel G. Alabi

Short Bio

I am an assistant professor in the Electrical and Computer Engineering Department at the University of Illinois at Urbana-Champaign. Until June 2025, I was a postdoctoral researcher at Columbia University and a junior fellow in the Simons Society of Fellows. I received my S.M. and Ph.D. degrees from Harvard University. I am also the president and co-founder of NaijaCoder Inc.

Research Interests

Keywords: privacy, information theory (classical and quantum), cryptography, AI/ML security.

My primary research interests span the theoretical aspects of private and secure communication. I also study the resulting tradeoffs in computational and statistical resources. Example computational resources include communication, randomness, time, memory, and parallelism. Example statistical resources include samples drawn from an unknown distribution, as well as labeled examples used for classification or regression.

Courses

- Instructor, CS/ECE 598, Topics in Information-Theoretic Cryptography, Fall 2025, Fall 2026

- Co-Instructor, CS/ECE 374, Intro to Algorithms & Models of Computation, Spring 2026

- Co-Instructor, NaijaCoder, Summer 2022, Summer 2023, Summer 2024, Summer 2025

My Office

1308 W Main Street MC 228

Urbana, IL 61801